IaC and cloud provisioning

HCP Terraform provides you with a robust and efficient cloud provisioning workflow. The choice of workflow is important, and changing the workflow later requires engineering effort. The high-level steps required to implement a cloud provisioning workflow are as follows.

- Plan your project, covering installation and configuration of HCP Terraform or Terraform Enterprise, and how you intend to provide self-service capability to your internal customers. The Terraform Operating Guide: Standardization HVD details this in more depth. Considering now how you onboard and work with early adopter teams, and later how you onboard progressively larger groups of teams until the platform is generally available, saves a lot of time and effort.

- Consider the size and bandwidth of your platform team. Consider what would happen to their workload if any part of the onboarding experience of your internal customers were not fully automated.

- Plan a landing zone provisioning workflow.

- Ensure your platform team is fully trained on IaC with Terraform and the cloud provider(s) you are using. Expect all contributors to read, understand, and adhere to the HashiCorp Terraform language style guide. Use your documentation to guide all developers to write Terraform code that is compliant with this style guide.

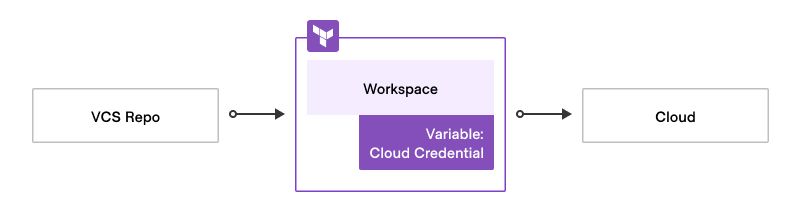

- Set up a Terraform Stack or workspace with cloud credentials.

- Store your Terraform code in your strategic version control system.

- Provision cloud resources from the IaC in the VCS using the Stack or workspace.

- Consider the configuration required for HCP Terraform to enable the deployment end-to-end in the context of your organization's security and compliance requirements. Revisit each step in the workflow and consider its requirements in terms of engineering content and effort. Focus on the manual steps and what you would need to do to automate each one. Also consider what would be necessary in a disaster recovery scenario.

- If you intend for your deployment to scale to a large number of teams, be sure to read the respective HVDs in this series.

The landing zone provisioning workflow is a crucial process that you implement so that your application or development teams provision infrastructure in the appropriate landing zones. Each cloud platform has its own recommendations for implementing these landing zones, and for Kubernetes-based teams, a Kubernetes namespace can also serve as a component of a landing zone.

To achieve end-to-end cloud provisioning, developers commit Terraform code to the designated repository, triggering a run in HCP Terraform, which uses cloud credentials to complete the plan phase. Once the plan is approved, HCP Terraform applies it in the Stack or workspace(s), provisioning the desired resources in the cloud.
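As a rough illustration of this flow, the following sketch uses the Terraform Enterprise provider to define a workspace that starts a run on each commit to a repository and waits for approval before applying. The organization, workspace, and repository names are placeholders rather than values from this guide, and the sketch assumes a VCS OAuth connection already exists in the organization.

```hcl
terraform {
  required_providers {
    tfe = {
      source = "hashicorp/tfe"
    }
  }
}

# Assumption: a VCS connection is already configured, and its OAuth token ID
# is passed in. The provider itself authenticates with a token such as TFE_TOKEN.
variable "oauth_token_id" {
  type        = string
  description = "OAuth token ID of an existing VCS connection in HCP Terraform."
}

resource "tfe_workspace" "app" {
  name         = "example-app-dev" # placeholder workspace name
  organization = "example-org"     # placeholder organization name

  # Keep approval manual so that a reviewer confirms the plan before apply.
  auto_apply = false

  # Commits to this repository and branch trigger a run in the workspace.
  vcs_repo {
    identifier     = "example-org/example-app-infra" # placeholder <org>/<repo>
    branch         = "main"
    oauth_token_id = var.oauth_token_id
  }
}
```

Leaving `auto_apply` set to `false` is one way to keep the approval step described above; teams with strong policy checks in place may choose differently.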

The platform team owns and implements a landing zone workflow, partnering with the networking, security, and finance teams as necessary, and adhering to the guidance provided by the cloud vendors' well-architected frameworks. Complete this before any application team initiates infrastructure provisioning in the cloud.

Landing zones

A cloud landing zone is a foundational concept in cloud computing and a common deployment pattern for scaled customers. Defined as a standardized environment deployed in a cloud to facilitate secure, scalable, and efficient cloud operations, it forms a blueprint for setting up workloads in the cloud and serves as a launching pad for broader cloud adoption.

Cloud landing zones address several important areas:

- Networking: Configured with the network resources, such as virtual private clouds, subnets, and connectivity settings needed for operating workloads in the cloud.

- Identity and access management: Helps to enforce IAM policies, RBAC, and other permissions settings.

- Security and compliance: Security configurations such as encryption settings, security group rules, and logging settings.

- Operations: This includes operational tools and services, such as monitoring, logging, and automation tools. These help to manage and optimize cloud resources effectively.

- Cost management: Enables cost monitoring and optimization by facilitating automation, tagging policies, budget alerts, and cost reporting tools.

Major cloud providers offer their own solutions for this approach:

- AWS Control Tower

- Azure Landing Zone Accelerator

- Cloud Foundation Toolkit for GCP

Landing zones are commonly deployed by HCP Terraform, but the landing zone concept also applies to HCP Terraform itself. During the onboarding of an internal customer, the platform team uses automation to deploy a control workspace, which then deploys the operational environment that customer needs to support their development remit. This is akin to the CSP account and configuration needed by the same team. The recommended way to do this is as follows.

- Complete preparative reading: Read about cloud landing zone patterns applicable to the cloud service providers with whom you partner.

- Define the HCP Terraform landing zone: There are a number of ways to apply the LZ concept, but core requirements include:

  - Use of the Terraform Enterprise provider so that there is state representation for the configuration. This applies to both HCP Terraform and Terraform Enterprise.

  - Definition of a VCS template which contains boilerplate Terraform code for the LZ and a directory structure designed and managed by the platform team.

  - Creation of a Terraform module for your private registry. The platform team calls this through its automated onboarding process when an application team wants to onboard. This module nominally creates the following:

    - A control workspace for the application team.

    - A VCS repository for the application team to store the code which runs in the control workspace.

    - Variables and variable sets as needed for efficient deployment.

    - Public and private cloud resources for the team to use as necessary.

  - Augment your onboarding pipeline to make use of the module. Hook the process into other organizational platforms for needs such as credential generation and observability.

  - Dedicate an HCP Terraform project to house the landing zone control workspaces separately from others the platform team owns. Use the Terraform Enterprise provider or HCP Terraform API to deploy this. The onboarding process directs creation of control workspaces into this project.

- Test: Create landing zones using the process.

- Update the module and VCS template: Expect to do this a number of times to refine the process as you scale. Do not underestimate this key area. Depending on the onboarding design, if you onboard ten internal customers and then need to change the process, the change applies only from that point on, for the next set of customers. Depending on the implementation, you may also need retrospective updates for customers already onboarded. This is why it is important to funnel sets of early adopters onto the platform as you develop the solution using stepwise refinement.

- Ensure smooth onboarding: Work to ensure the onboarding process is as smooth as possible for the application teams. If you use ServiceNow as the front door to the onboarding process, liaise with the ServiceNow team as early as possible, as we find there is always a lead time for remediation.
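The landing zone module described in the steps above could look something like the following minimal sketch. It assumes GitHub as the VCS and uses the `integrations/github` provider alongside the Terraform Enterprise provider; all variable names, resource names, and naming patterns are hypothetical and only illustrate the shape of such a module. Cloud resources for the team are omitted.

```hcl
terraform {
  required_providers {
    tfe = {
      source = "hashicorp/tfe"
    }
    github = {
      source = "integrations/github"
    }
  }
}

# Hypothetical module inputs, supplied by the onboarding pipeline.
variable "organization" {
  type = string
}
variable "team_name" {
  type = string
}
variable "project_id" {
  type        = string
  description = "ID of the project dedicated to landing zone control workspaces."
}
variable "oauth_token_id" {
  type = string
}
variable "template_owner" {
  type = string
}
variable "template_repository" {
  type = string
}

# Repository for the team's control workspace code, created from the
# platform team's VCS template.
resource "github_repository" "control" {
  name       = "${var.team_name}-landing-zone"
  visibility = "private"

  template {
    owner      = var.template_owner
    repository = var.template_repository
  }
}

# Control workspace, placed in the dedicated landing zone project.
resource "tfe_workspace" "control" {
  name         = "${var.team_name}-control"
  organization = var.organization
  project_id   = var.project_id

  vcs_repo {
    identifier     = github_repository.control.full_name
    oauth_token_id = var.oauth_token_id
  }
}

# Variable set holding values shared by the team's workspaces.
resource "tfe_variable_set" "team" {
  name         = "${var.team_name}-defaults"
  organization = var.organization
}

resource "tfe_variable" "team_name" {
  key             = "team_name"
  value           = var.team_name
  category        = "terraform"
  variable_set_id = tfe_variable_set.team.id
}

resource "tfe_workspace_variable_set" "control" {
  workspace_id    = tfe_workspace.control.id
  variable_set_id = tfe_variable_set.team.id
}

output "control_workspace_id" {
  value = tfe_workspace.control.id
}
```

Because every object is created through providers, the onboarding configuration is represented in state, which is the reason the steps above call for the Terraform Enterprise provider.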

Summary of landing zone workflow

- Ticket raised: Audit trail created, approval acquired as appropriate.

- Landing zone child module call code added: The top-level workspace and its VCS repository are where the platform team collects and manages onboarded teams.

  - We recommend automating the addition of this child module call to ensure the solution is scalable. Use your strategic orchestration technology to manage this automation.

  - If your business expects to onboard thousands of teams, plan the platform team's landing zone deployment workspace(s) carefully in advance to avoid the workspace run performance issues discussed at the end of the previous section.

- Run the top-level workspace: This creates the control workspace for the application team using the Terraform landing zone module.

- Application team control workspace created: The VCS template contains Terraform code that uses child modules to create the application team's Stacks or workspaces. In a franchise model, the application team has write access to this repository so that they can configure it to deploy all the Stacks or workspaces required to support their needs. In a service catalog model, run the control workspace to create the application team's Stacks or workspaces, which then hook into subsequent request processes for pre-canned infrastructure requests.
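One possible shape for the child module call in the top-level workspace is sketched below. The module source, version constraint, and input names are hypothetical; substitute those of the module in your private registry. Driving the call from a map with `for_each` is a design choice that lets automation onboard a team by adding one map entry instead of generating a new module block each time.

```hcl
# Hypothetical inputs for the platform team's top-level workspace.
variable "landing_zone_project_id" {
  type        = string
  description = "ID of the project that houses control workspaces."
}

variable "teams" {
  type = map(object({
    contact = string
  }))
  description = "Onboarded teams, keyed by team name. Automation adds entries here."
  default     = {}
}

# One landing zone per onboarded team.
module "landing_zone" {
  # Placeholder private registry address: <hostname>/<organization>/<name>/<provider>
  source  = "app.terraform.io/example-org/landing-zone/tfe"
  version = "~> 1.0"

  for_each = var.teams

  # Hypothetical module inputs.
  team_name  = each.key
  contact    = each.value.contact
  project_id = var.landing_zone_project_id
}
```

With a very large number of teams, a single map in one workspace may lead to slow runs, so you might split teams across several top-level workspaces, for example by business unit.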

Workload identity

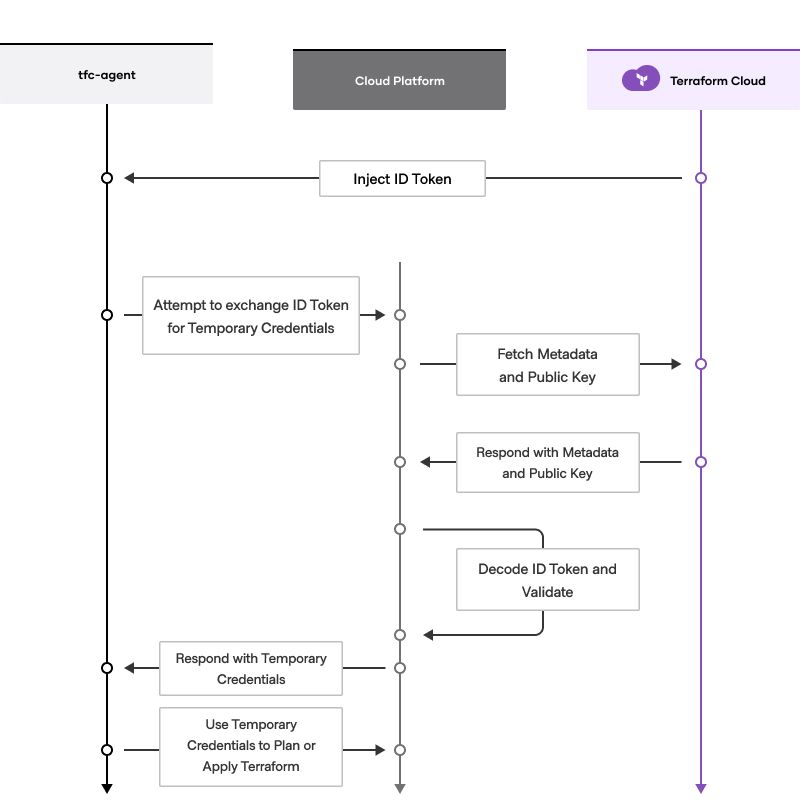

With dynamic credentials, users can authenticate their cloud and Vault providers using OpenID Connect (OIDC) compliant identity tokens to obtain just-in-time credentials, eliminating the need to store long-lived secrets in HCP Terraform or Terraform Enterprise. Some of the benefits of workload identity include the following.

- No more cloud credentials: Removes the need to store long-lived cloud credentials in HCP Terraform. Temporary cloud credentials are instead retrieved either from the CSP or using Vault on the fly.

- No more secret rotation logic: Credentials issued by the cloud platform are temporary, removing the need to rotate secrets.

- Granular permissions control: Allows for using authentication and authorization tools from the cloud service provider to scope permissions based on HCP Terraform metadata such as organization, project, Stack or workspace, and run phase.

We recommend implementing workload identity to ensure that your cloud provisioning workflow adopts the most secure posture possible at all times. You will likely start by using fixed cloud credentials to authenticate your provider configuration. Include workload identity in your plans as the project proceeds, and ensure the concept is well understood and fully implemented prior to production deployment.
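As an illustration of workload identity with one cloud provider, the sketch below configures AWS to trust HCP Terraform as an OIDC identity provider and scopes a role to a single workspace through the `sub` claim. It is a minimal example under stated assumptions: AWS is the target CSP, the variable names are hypothetical, and the role has no permissions policy attached yet.

```hcl
terraform {
  required_providers {
    aws = {
      source = "hashicorp/aws"
    }
    tfe = {
      source = "hashicorp/tfe"
    }
    tls = {
      source = "hashicorp/tls"
    }
  }
}

# Hypothetical inputs describing the workspace that receives credentials.
variable "tfc_hostname" {
  type    = string
  default = "app.terraform.io"
}
variable "organization_name" {
  type = string
}
variable "project_name" {
  type = string
}
variable "workspace_name" {
  type = string
}
variable "workspace_id" {
  type = string
}

data "tls_certificate" "tfc" {
  url = "https://${var.tfc_hostname}"
}

# Register HCP Terraform as an OIDC identity provider in the AWS account.
resource "aws_iam_openid_connect_provider" "tfc" {
  url             = data.tls_certificate.tfc.url
  client_id_list  = ["aws.workload.identity"]
  thumbprint_list = [data.tls_certificate.tfc.certificates[0].sha1_fingerprint]
}

# Role that runs in the named workspace may assume, in any run phase.
# Attach a least-privilege permissions policy separately.
resource "aws_iam_role" "tfc" {
  name = "hcp-terraform-${var.workspace_name}"

  assume_role_policy = jsonencode({
    Version = "2012-10-17"
    Statement = [{
      Effect    = "Allow"
      Action    = "sts:AssumeRoleWithWebIdentity"
      Principal = { Federated = aws_iam_openid_connect_provider.tfc.arn }
      Condition = {
        StringEquals = {
          "${var.tfc_hostname}:aud" = "aws.workload.identity"
        }
        StringLike = {
          "${var.tfc_hostname}:sub" = "organization:${var.organization_name}:project:${var.project_name}:workspace:${var.workspace_name}:run_phase:*"
        }
      }
    }]
  })
}

# Environment variables that switch the workspace to dynamic credentials.
resource "tfe_variable" "aws_provider_auth" {
  workspace_id = var.workspace_id
  key          = "TFC_AWS_PROVIDER_AUTH"
  value        = "true"
  category     = "env"
}

resource "tfe_variable" "aws_run_role_arn" {
  workspace_id = var.workspace_id
  key          = "TFC_AWS_RUN_ROLE_ARN"
  value        = aws_iam_role.tfc.arn
  category     = "env"
}
```

Tightening the `sub` condition, for example replacing `run_phase:*` with `run_phase:plan` on a read-only role, is one way to apply the granular permissions control described above.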